4 Strategies to Create a Great Post-Learning Survey

If you have ever attended a training event, you have most certainly been presented with the opportunity to provide feedback to the trainer as the event concluded. More than likely, you have given your own learners an opportunity to provide you with feedback.

As a training professional, you innately understand that the post-learning survey is an important step in the instructional design process. The feedback from learners is intended to help instructional designers identify which activities the learners enjoyed, what concepts or topics they struggled with, and how much they feel they learned. Effective evaluation surveys allow you to analyze areas of the training where improvement is needed and can help you measure the overall effectiveness of your training programs. Post-learning surveys are so valuable that the ANSI/IACET 2018-1 Standard for Continuing Education and Training has an entire category covering the requirements for training providers to have processes for the evaluation of learning events.

The problem, however, is that retail and food service businesses have become aggressive about collecting feedback from their customers. In today’s environment, everywhere your learners go and after every encounter, they are asked to complete a survey and provide feedback. Survey requests have become ubiquitous, and their volume has grown so much that your learners have become bored, tired, or uninterested in your survey!

When the course ends and you ask them to complete your post-learning event survey, the attendees sigh and attempt to leave. This “survey fatigue” results in low response rates, abandoned surveys, low data quality, and serious survey bias. So, how do you persuade your learners to complete your survey? By designing great surveys! How do you design great surveys? By following these four strategies:

Strategy #1: Minimize the Goals of the Evaluation

Before creating the survey, make a list of the information you would like to learn from the responses. Some of these might be:

- Gain insight on the effectiveness of the course structure

- Determine if content was appropriate

- Ascertain the quality of the delivery method

- Determine the effectiveness of the course timing

- Detect gaps in the instructor’s abilities

- Get a sense of the usability of the LMS and/or provided materials

- Uncover issues with the learning environment that may impede learning

- Identify gaps in the accessibility of the material

- Discover disparities in the training outcomes versus the learning objectives

These are just a quick handful of legitimate and necessary items that an instructional designer would want to understand in order to improve the course. If he asks one broad question for each goal, he may not get detailed enough information to make any adjustments. If he asks three or four detailed questions for each goal, his survey may become too long, and the learners’ survey fatigue will skew the results.

Instead of trying to collect all the information in one survey presented to all the learners, it may be better to create several surveys that focus on one or two areas each and rotate which survey is given. While no one group of learners will provide feedback on all the areas, a thorough and complete evaluation of the course will be collected from the community of learners.
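As a rough illustration of the rotation idea, the following Python sketch assigns one focused survey to each group of learners in turn. The survey IDs, goal groupings, and cohort names are hypothetical placeholders, not part of any particular LMS or survey tool.

```python
from itertools import cycle

# Hypothetical focused surveys, each covering one or two evaluation goals.
SURVEYS = {
    "A": ["course structure", "content appropriateness"],
    "B": ["delivery method", "course timing"],
    "C": ["learning environment", "accessibility"],
}


def assign_surveys(sessions, survey_ids):
    """Assign one survey per session, rotating through the survey IDs in order."""
    rotation = cycle(survey_ids)
    return {session: next(rotation) for session in sessions}


# Hypothetical cohorts; the fourth cohort wraps back around to survey "A".
assignments = assign_surveys(
    ["Jan cohort", "Feb cohort", "Mar cohort", "Apr cohort"], list(SURVEYS)
)
```

Each cohort answers only a short survey, yet after one full rotation every goal on the list has been evaluated by at least one group.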

Strategy #2: Understand the Survey Completer’s Thought Process

By understanding the thought process a learner goes through when completing a survey, you can intentionally minimize barriers at each mentally expensive step. According to cognitive researchers[i], a learner answering a survey question works through a five-stage process to accomplish the task.

- First, the respondent must read and interpret the question. In this step, the respondent attempts to clarify in his mind exactly what the question is asking.

- Once he deduces the intent of the question, he reaches back into his memory and retrieves relevant experiences based on his understanding of the question.

- From those memories he forms a provisional judgement of his experience based on his perception of the context of the question.

- Next, he reads and interprets the provided choices and translates the provisional judgement into one of the options.

- Finally, he may revise his response, as necessary, to align with his internal views of what is socially desirable.

As you can see, answering survey questions requires expending quite a bit of mental energy and this process must be repeated for each and every question on the survey! To prevent survey fatigue, designers must deliberately prevent mental fatigue. Once you understand the thought process a learner is facing to complete the survey, you’ll be able to make better design decisions when creating the survey that nudge the learner towards accurate completion rather than abandonment of the survey.

Strategy #3: Choose the Right Types of Questions to Ask

Survey questions can be either open-ended or closed-ended. Open-ended questions simply ask a question and allow for a free-form response from the participants. Closed-ended questions, on the other hand, ask a question and provide a constrained set of options from which the survey completer must choose. Of course, neither question type is perfect; each has its trade-offs.

| | Open-ended Questions | Closed-ended Questions |
|---|---|---|
| Type of information | More qualitative in nature. | More quantitative in nature. |
| When to use | When instructional designers have a more vaguely defined goal for the evaluation. | When instructional designers are looking for specific feedback. |
| Burden on the Survey Author | Relatively easy to write because there are no response options. | Relatively difficult to write because they must include an appropriate set of response options. |
| Burden on the Respondent | Take more time and effort. | Take less time and effort. |
| Burden on the Analyst | More difficult to analyze because the responses must be transcribed, coded, and submitted to some form of qualitative analysis, such as content analysis. | Easy to analyze because the responses can be easily converted to numbers and entered into a spreadsheet or statistical software. |
| Dependability of Results | More valid and reliable due to less bias of the author. | Less valid and reliable due to authors introducing bias with choices. |

Tip: Avoid using scales with only numerical labels as they introduce too much room for interpretation. Instead, only present respondents with verbal labels that you will convert to numerical values in the analysis.
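The conversion described in the tip can be sketched in a few lines of Python. The five-point agreement scale and the sample responses below are assumptions chosen for illustration; substitute whatever verbal labels your survey actually presents.

```python
from statistics import mean

# Hypothetical five-point verbal scale. Respondents only ever see the labels;
# the numeric codes are assigned at analysis time.
SCALE = {
    "Strongly disagree": 1,
    "Disagree": 2,
    "Neutral": 3,
    "Agree": 4,
    "Strongly agree": 5,
}


def score_responses(responses):
    """Convert verbal labels to numbers, skipping blank (unanswered) items."""
    return [SCALE[label] for label in responses if label]


# Made-up sample data: four answers and one skipped item.
responses = ["Agree", "Strongly agree", "", "Neutral", "Agree"]
scores = score_responses(responses)
average = mean(scores)
```

Keeping the mapping in one place means the labels can be reworded later without changing how earlier responses were scored.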

Strategy #4: Compose Short, Targeted Questions

Once you know the goals of the survey, you can start to craft your questions. Long and complex questions require learners to expend more concentration, which makes the survey take longer to complete and hastens survey fatigue. Instead, questions should follow the BRUSO model[ii]:

- Brief – Question items should be short and to the point, avoiding long or overly technical wording, jargon, and unnecessary words. This succinctness makes it easier for respondents to interpret the intent of the question and lessens the cognitive load, resulting in a faster completion time.

- Relevant – Question items should be tied to one of the specific goals of the evaluation. If a learner’s age, gender, marital status, or income is not relevant to the content or learning objectives, then these questions should not be included. Besides making the evaluation form faster to complete, leaving them out also avoids irritating respondents with items they may perceive as threats to their privacy.

Secondly, solicit feedback only on items on which you can make adjustments. For example, if the content of a course is mandated by a regulatory agency, then getting feedback on that content may be irrelevant because the instructional designer cannot change the content. The lessons learned from the feedback should be actionable.

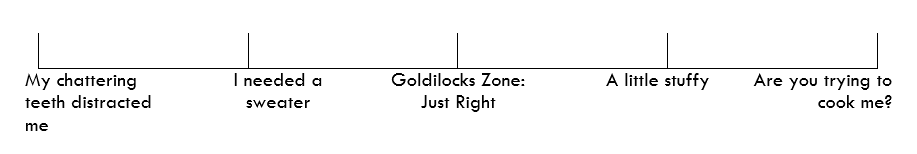

- Unambiguous – Question items should only be able to be interpreted in one way. Using vague adjectives or descriptive language opens the item up to being misconstrued or requires the respondent to expend more mental energy understanding the context. For example, take a question such as “Did you find the temperature of the room adequate?” What does “adequate” mean? The person may have found the room a bit warm, but not too hot. So, the temperature was tolerable, but not ideal. The respondent will spend valuable mental energy trying to decide if they should answer “Yes” or “No.” A less ambiguous way to ascertain the information is using a scale:

ex) Describe the temperature of the room:

Along these same lines, it is important to be clear-cut when developing the response options. If your question asks for a respondent to place themselves in a category, the category options need to be both mutually exclusive and collectively exhaustive. Mutually exclusive means that each option is distinct and does not overlap another option in the list. Collectively exhaustive means that all possibilities are covered in the options.

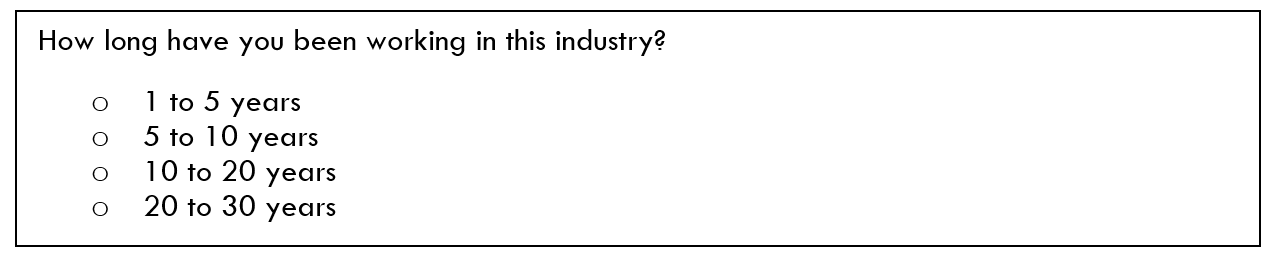

A common error is with timeframes where the previous option’s timeframe ends on the same value that begins the next option’s timeframe. Take a look at this example:

This list of options is not mutually exclusive; a person who has worked 5, 10, or 20 years does not know exactly where to categorize himself. A person who has worked 5 years could either categorize himself in the 1 to 5 years option or in the 5 to 10 years option.

Also, the list is not collectively exhaustive. A person who has worked in the industry for 4 months may be confused because there is no option for under one year. Likewise, a long-time industry veteran with over 30 years of experience would not be able to quickly categorize himself.

To fix this, it would be better to ask:

The possible confusion caused by overlapping timeframes has been removed and the missing timeframes have been added.
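A quick way to catch these problems before a survey goes out is to check the ranges programmatically. The Python sketch below is only an illustration: the `find_problems` helper is hypothetical, and both option lists are assumed values consistent with the description above (whole years, with 0 standing for less than one year), not the exact options from the original example.

```python
def find_problems(options):
    """Check ordered (low, high) ranges of whole years, both ends inclusive.

    high=None marks an open-ended final option such as "more than 30 years".
    Returns a list of problems; an empty list means the options are mutually
    exclusive and collectively exhaustive from 0 upward.
    """
    problems = []
    if options[0][0] > 0:
        problems.append(f"gap: nothing covers values below {options[0][0]}")
    # Compare each option with the one that follows it.
    for (_, high), (next_low, _) in zip(options, options[1:]):
        if next_low <= high:
            problems.append(f"overlap: {next_low} fits two options")
        elif next_low > high + 1:
            problems.append(f"gap: nothing covers {high + 1} to {next_low - 1}")
    if options[-1][1] is not None:
        problems.append(f"gap: nothing covers more than {options[-1][1]}")
    return problems


# Assumed flawed list: shared endpoints, no "under 1 year", no "over 30 years".
flawed = [(1, 5), (5, 10), (10, 20), (20, 30)]
# Assumed corrected list: distinct endpoints plus the two missing options.
fixed = [(0, 0), (1, 5), (6, 10), (11, 20), (21, 30), (31, None)]

flawed_problems = find_problems(flawed)
fixed_problems = find_problems(fixed)
```

With these assumed inputs, the flawed list reports the three shared endpoints and the two missing ends of the range, while the corrected list reports nothing.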

- Specific – It should be clear to the learner completing the survey what their response should be about. One way to ensure the questions are specific is to align each question to one, and only one, metric or goal. For example, the question: “Was the content well organized and easy to follow?” is not specific because it measures two related, but different issues. If the learner found the content to be organized, but complicated, he is unsure whether to check “Yes” or “No” and must pick one of the two options. This leads to problems when analyzing the data as well. The instructional designer responding to the feedback might miss the nuance that learners are not struggling with the organization, but with confusing content. If he re-organizes the content, but does not simplify the concepts, he does not actually address the issue.

- Objective – Authors should attempt to ensure that questions are objective in the sense that they do not reveal the instructional designer’s own opinion or lead the participants to answer in a particular way. Conducting a pilot test and asking other people to explain how they interpreted the question is the best way to know how other people will interpret the wording of the question.

Takeaway

If you’ve struggled with getting learners to complete the post-learning survey, then hopefully these tips and strategies will help you design a post-learning survey with intentionality that will provide accurate feedback without over-taxing your learners.

[i] Sudman, S., Bradburn, N. M., & Schwarz, N. (1996). Thinking about answers: The application of cognitive processes to survey methodology. San Francisco, CA: Jossey-Bass.

[ii] Peterson, R. A. (2000). Constructing effective questionnaires. Thousand Oaks, CA: Sage.

About the Author

As the Vice President of Technology and Organizational Effectiveness, Randy is responsible for overseeing and implementing the technological solutions necessary to achieve the strategic and operational goals of the Board of Directors. He oversees the development of all web applications, like the public website, the IACET member portal, and the accreditation application submission and review modules.

With over 20 years of experience as a full-stack developer, providing software and IT solutions within the non-profit and government verticals, he is an expert in the design, development, and implementation of membership management systems, focusing on tightly integrating technology platforms for associations.